Gaming News

All our coverage of the latest video games, consoles and accessories.

Blizzard takes aim at Overwatch 2 console cheaters

Blizzard is targeting Overwatch 2 cheaters who use a keyboard and mouse on consoles to gain an advantage over controller users.

It took 20 years for Children of the Sun to become an overnight success

Though it feels like Children of the Sun popped into existence over the span of two months, it took Rother a lifetime to get here.

Razer's Kishi Ultra gaming controller works with damn near everything, including some foldables

Razer just released the Kishi Ultra mobile gaming controller. It works with some foldable phones, in addition to Android devices, iPhones and iPads.

Cities: Skylines 2's embarrassed developers are giving away beachfront property for free

Cities: Skylines 2 developer Colossal Order is unlisting and refunding purchases of its controversial Beach Properties asset pack less than a month after its release.

Best Gaming Hardware

The 5 best mechanical keyboards for 2024

Here's everything you need to know before buying a mechanical keyboard, plus the best mechanical keyboards you can get right now.

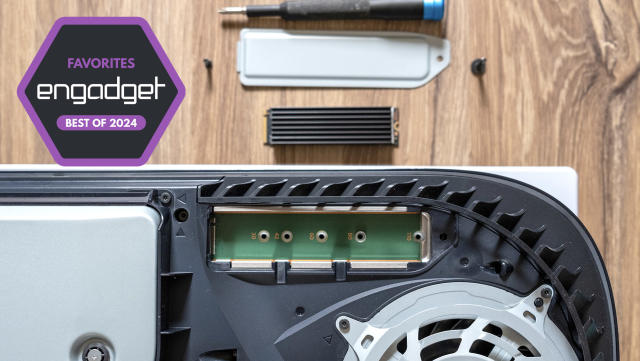

The best SSDs for PS5 in 2024

If you need more space to store games on your PS5, these are the best drives you can get.

The best gaming laptops for 2024

There's never been a better time to buy a gaming laptop. These are our top picks that we've tested and reviewed that will fit all kinds of use cases and budgets.

The best VR headsets for 2024

If you've been intrigued by virtual reality for a while, there are a number of solid headsets to consider. These are the best VR headsets we've tested and reviewed.

Latest

Analogue Duo review (91/100): A second chance for an underappreciated console

Analogue's most expensive console in years and its first system with a CD-ROM drive is the ultimate ode to a forgotten gem. It's not quite perfect, but it's a great addition to any serious game collector's arsenal.

The 5 best mechanical keyboards for 2024

Here's everything you need to know before buying a mechanical keyboard, plus the best mechanical keyboards you can get right now.

Playdate developers have made more than $500K in Catalog sales

Panic is celebrating Playdate's second birthday this month, and the party favors include some piping-hot statistics about Catalog game sales.

Supergiant shows off Hades II's gameplay and new god designs

Supergiant Games just treated Hades fans to an extensive look at the game's upcoming sequel.

Twitch is giving all users access to its discovery feed later this month

The feed will first appear as a new tab in the mobile app.

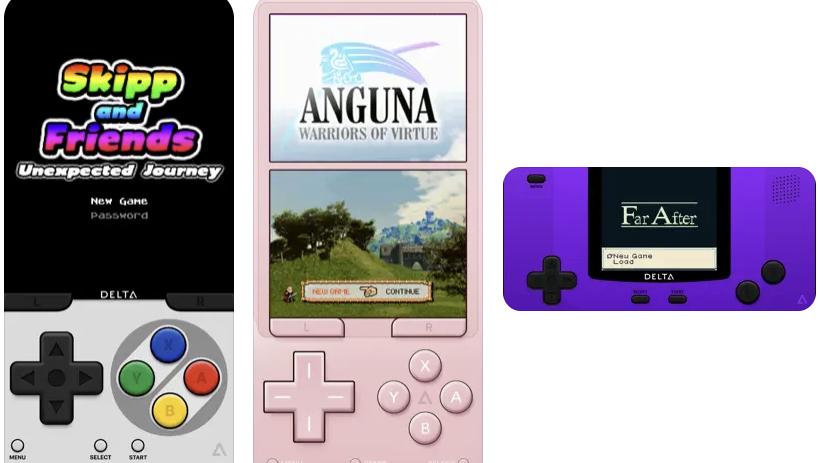

Nintendo emulator Delta hits the iOS App Store, no sideloading required

Delta (a successor to GBA4iOS) is one of the best-known Nintendo emulators on iOS. Now, you can download Delta for free from the App Store without having to sideload it.

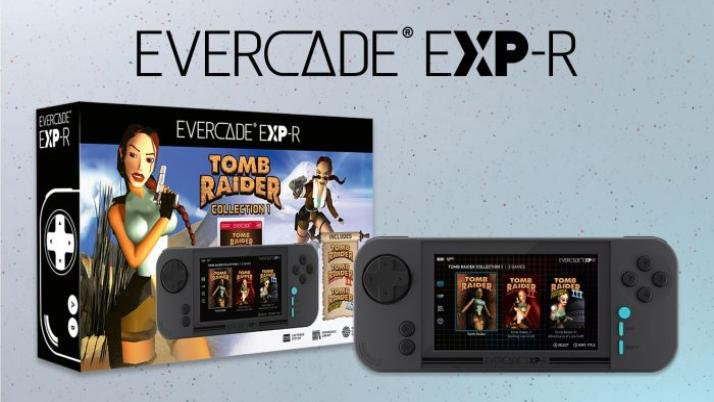

Cheaper Evercade retro consoles will arrive in July

Cheaper versions of Evercade's retro game consoles are on the way. The first three Tomb Raider games will be bundled with the EXP-R and VS-R.

Lorelei and the Laser Eyes, by Sayonara Wild Hearts devs, comes out on May 16

Lorelei and the Laser Eyes, by Sayonara Wild Hearts devs, releases on May 16. It’ll be available for the Switch and PC via Steam.

Yars Rising revives a 40-year-old Atari game as a modern metroidvania

Yars Rising is a forthcoming Metroidvania with an interesting pedigree. It’s a sequel to an Atari 2600 game from 1982.

Shadow platformer Schim is coming to PC and consoles on July 18

Schim is an indie platformer that sees you playing as a creature that moves by jumping between shadows. It's coming to PC and consoles on July 18.

ASUS ROG Zephyrus G16 (2024) review (89/100): Not just for gamers

Like its smaller sibling, the ASUS ROG Zephyrus G16 combines a great display with a super sleek build but with better connectivity and even longer battery life.

Cozy cat sim Little Kitty, Big City arrives for consoles and PCs on May 9

The cozy cat sim Little Kitty, Big City releases for the Nintendo Switch and other consoles on May 9. Preorders are available now and it costs $25.

Watch Nintendo's Indie World stream here at 10AM ET

Watch the latest Nintendo Indie World stream here at 10AM ET.

The best SSDs for PS5 in 2024

If you need more space to store games on your PS5, these are the best drives you can get.

Take-Two plans to lay off 5 percent of its employees by the end of 2024

Take-Two Interactive plans to lay off 5 percent of its workforce, or about 600 employees, by the end of the year.

Nintendo’s next Indie World showcase is set for April 17

Nintendo has announced an Indie World showcase for April 17. Might it finally, at long last, be time for Hollow Knight: Silksong news?